What Is Chain of Thought Prompting: A 2026 Guide

You've probably seen this already in your own product.

A user asks a model something that looks simple on the surface, but needs two or three logical steps. The answer comes back fluent, fast, and wrong. Not obviously wrong either. Wrong in the dangerous way: confident enough to pass a quick glance, brittle enough to break a workflow, and expensive enough to create support tickets later.

That's the gap chain of thought prompting was designed to close.

If you're trying to figure out what is chain of thought prompting in practical terms, the short version is this: it's a way of asking a model to reason through intermediate steps instead of jumping straight to an answer. That sounds small. In production, it changes prompt design, latency, token usage, failure patterns, and how you should evaluate model behavior.

Table of Contents

- The 'Aha' Moment in AI Reasoning

- What Is Chain of Thought Prompting and Why Does It Work

- From Theory to Practice with CoT Prompt Examples

- Advanced CoT Techniques for Complex Problems

- The Hidden Costs and Failure Modes of CoT

- Productizing CoT Prompts Safely and Effectively

The 'Aha' Moment in AI Reasoning

A common failure looks like this.

You ask a model: “A shop sells notebooks in packs of 3. Maya buys 4 packs and gives 2 notebooks away. How many notebooks does she have left?” A direct-answer prompt can still fail on that kind of question because the model compresses the task into a guess instead of working through multiplication and subtraction in order.

Then you change one line in the prompt: “Let's think step by step.”

Suddenly the answer often gets better. The model starts by calculating total notebooks, then subtracts what Maya gave away, then returns a final answer. Nothing about the model weights changed. You changed the prompt so the model had room to process the problem as a sequence.

That's the moment many organizations realize they aren't just dealing with a text generator. They're dealing with a system whose performance depends heavily on how reasoning is elicited.

Why this felt like a breakthrough

Before CoT became mainstream, standard prompting often struggled on multistep reasoning tasks. The model could produce a polished answer, but it had no explicit scaffold for getting there. On simple retrieval or rewriting tasks, that's fine. On arithmetic, symbolic manipulation, or commonsense chains, it often isn't.

Practical rule: If the task has hidden intermediate steps, a direct prompt often hides the model's failure until it reaches your users.

The primary value of CoT isn't that it makes a model “smarter” in some mystical sense. It changes the shape of the work. Instead of forcing a one-hop answer, it encourages a path.

That's why the technique mattered so much to product teams. It gave builders a prompt-level intervention for reasoning errors. No retraining. No new infrastructure. Just a better way to ask.

What Is Chain of Thought Prompting and Why Does It Work

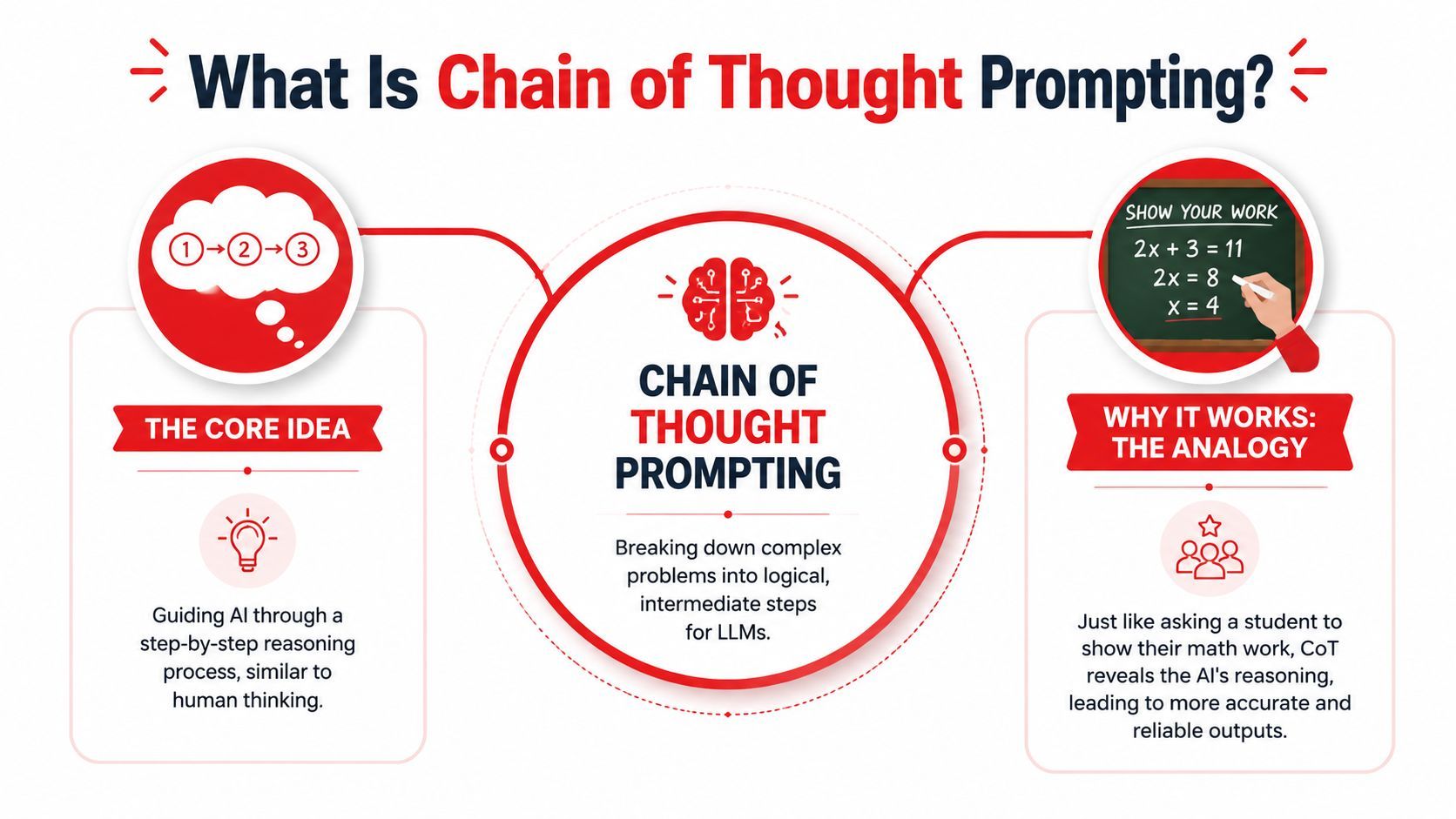

Chain of thought prompting asks a language model to produce intermediate reasoning before it gives the final answer. In practice, that means the prompt does not just ask for an outcome. It asks for the path.

That distinction matters on tasks with hidden steps. Arithmetic, rule application, planning, and multi-constraint QA often fail because the model jumps to a plausible answer too early. CoT reduces that jump. It gives the model a structure for breaking the problem into smaller moves, then completing them in order.

The version of the definition that matters in production

There are two common forms:

- Zero-shot CoT asks for reasoning without examples, often with a cue like “Let's think step by step.”

- Few-shot CoT shows worked examples so the model can copy the reasoning format as well as the answer style.

The mechanism is simple. The prompt changes the output shape from a single leap to a sequence. NVIDIA's explanation of CoT prompting describes this as establishing a reasoning pattern the model can continue on the current task https://www.nvidia.com/en-us/glossary/cot-prompting/.

That sounds theoretical. The operational takeaway is more concrete. CoT often improves answer quality on multi-step tasks because it makes the model keep track of intermediate state instead of compressing everything into one guess.

Why the 2022 paper changed prompt engineering

CoT became widely adopted after the 2022 paper by Jason Wei and collaborators showed large gains on reasoning benchmarks. In the reported results, a 540B-parameter model reached 78% accuracy with CoT versus 18% without it on arithmetic reasoning, improved from 4% to 51% on Last Letter Concatenation, and from 70% to 90% on CSQA, as summarized in Prompting Guide's coverage of the original CoT paper.

One result mattered more than the headline numbers. The gains were much stronger on larger models. That is still a useful rule for product teams. CoT is not equally effective across every model class, and small models often produce verbose reasoning without a matching increase in correctness.

Why it works at the prompt level

The best mental model is constrained generation.

A direct prompt leaves the model free to produce the most likely answer string. A CoT prompt asks it to emit intermediate steps first, which changes the token-by-token path it follows. That can help in three ways:

- Task decomposition. The model handles a large problem as smaller subproblems.

- State tracking. Intermediate values stay visible long enough to influence later steps.

- Pattern reuse. Once the prompt establishes a reasoning format, the model can apply the same structure to similar inputs.

This does not make the model reliable by default. It makes failure easier to shape and sometimes easier to inspect.

That trade-off is important in production. More reasoning tokens usually mean higher latency and cost. Exposed reasoning can also look convincing while still being wrong. For teams shipping AI features, the right question is not “Does CoT work?” It is “On which tasks does CoT improve accuracy enough to justify the extra tokens, response time, and validation overhead?”

Few-shot CoT is often stronger when the task needs a specific reasoning style or domain format. It is also harder to maintain because example quality, ordering, and length all affect results. Zero-shot CoT is easier to deploy, but it tends to be less consistent on harder tasks.

The practical lesson is straightforward. CoT is not just a prompting trick. It is a controllable way to trade more tokens for better reasoning behavior on the subset of tasks where intermediate steps help.

From Theory to Practice with CoT Prompt Examples

A product team usually sees the value of CoT the first time a model gets the right answer for the wrong reason, or the wrong answer with no clue how it got there. Side by side prompt tests make that visible fast. They also show the trade-off you have to manage in production: better reasoning often costs more tokens, more latency, and more QA effort.

Use the same task across prompt styles. That isolates what the prompt is changing.

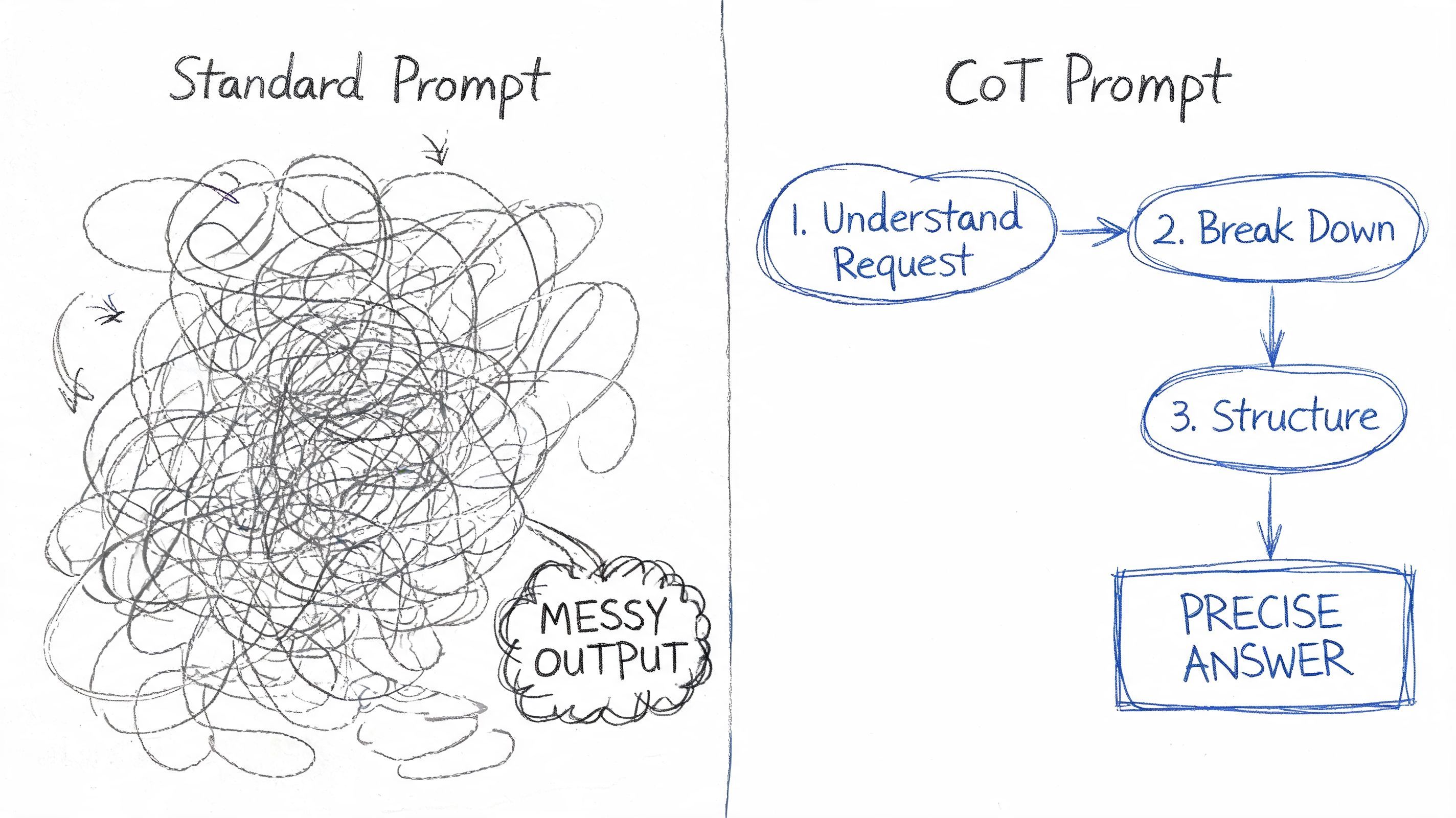

A standard prompt

A direct prompt asks for the answer and nothing else.

Prompt

A bakery makes 5 trays of cookies. Each tray holds 12 cookies. The baker gives away 8 cookies. How many cookies remain?

Typical response style

52

This works well on easy tasks and low-latency paths. The weakness shows up during debugging. If the answer is wrong, the response gives your team nothing to inspect, validate, or log beyond the final number.

A zero-shot CoT prompt

A zero-shot CoT prompt adds a reasoning instruction without providing examples.

Prompt

A bakery makes 5 trays of cookies. Each tray holds 12 cookies. The baker gives away 8 cookies. How many cookies remain? Let's think step by step.

Typical response style

5 trays × 12 cookies = 60 cookies.

60 - 8 = 52.

Final answer: 52.

This is often the fastest useful upgrade for multistep tasks. It takes little prompt engineering effort, and it can improve visibility into arithmetic, filtering, ranking, or rule-application mistakes.

It also creates a new operational problem. The model chooses its own structure. On one request it may produce clean steps. On the next, it may ramble, skip steps, or mix reasoning with the final answer in a format your parser cannot trust.

A few-shot CoT prompt

Few-shot CoT gives the model a pattern to follow, not just an instruction to reason.

Prompt

Q: Liam has 3 boxes with 4 pencils in each box. He loses 2 pencils. How many pencils does he have now?

A: First calculate the total pencils: 3 × 4 = 12. Then subtract the lost pencils: 12 - 2 = 10. Final answer: 10.Q: A teacher has 6 packs of stickers with 5 stickers in each pack. She gives 7 stickers to students. How many stickers are left?

A: First calculate the total stickers: 6 × 5 = 30. Then subtract the stickers given away: 30 - 7 = 23. Final answer: 23.Q: A bakery makes 5 trays of cookies. Each tray holds 12 cookies. The baker gives away 8 cookies. How many cookies remain?

A:

Typical response style

First calculate the total cookies: 5 × 12 = 60. Then subtract the cookies given away: 60 - 8 = 52. Final answer: 52.

This approach is usually better for repeated workflows where consistency matters as much as raw accuracy. Claims adjudication, support policy checks, invoice review, and structured transformations all benefit when the reasoning pattern stays stable across requests.

The cost is maintenance. Examples age. One weak example can pull the model into a bad format. Longer prompts also raise token spend and can push response times past what a user-facing flow allows.

Here is the practical difference:

A good walkthrough helps make the pattern concrete:

In production, the decision is usually straightforward. Start with the direct prompt and measure failure cases. Add zero-shot CoT if errors cluster around missed intermediate steps. Move to few-shot CoT when you need a stable output pattern your application can evaluate, store, or post-process reliably.

One rule helps avoid wasted effort. Do not add CoT to every endpoint by default. Use it where intermediate reasoning changes outcomes enough to justify the extra tokens and latency.

Advanced CoT Techniques for Complex Problems

A familiar production problem looks like this: the model gets a hard case right in staging, then disagrees with itself in live traffic. The issue is not basic task understanding. The issue is variance.

Advanced CoT techniques are built for that situation. They do not make reasoning free or universally better. They give teams a way to improve answer stability on tasks where a single chain is too brittle to trust.

Self-consistency as a committee approach

Self-consistency works like running several independent attempts and selecting the answer that converges.

Instead of taking the first chain at face value, you sample multiple reasoning paths and compare the final outputs. If the same conclusion appears across several runs, confidence usually goes up. This is especially useful on math, logic, extraction with tricky edge cases, and policy decisions where one early mistake can send the rest of the response off course.

I use this pattern when the model is good enough to solve the task but not stable enough to trust on one pass. That distinction matters. If the model lacks the capability, sampling more chains usually just gives you multiple polished wrong answers.

The trade-off is operational, not theoretical. Self-consistency increases token usage, response time, and infrastructure load. In a user-facing flow with a tight latency budget, that often rules it out. In batch review, background jobs, fraud checks, or queue-based approval systems, it can be a practical reliability upgrade.

A good rule is simple. Only pay for multiple chains when disagreement between runs predicts real business risk.

Auto-CoT and generated demonstrations

Manual few-shot CoT examples become expensive to maintain once the task family gets broad. Prompts drift. Edge cases pile up. Someone has to keep examples aligned with current policy, schema, and model behavior.

Auto-CoT addresses that by generating demonstrations instead of relying on a human to write every exemplar. The common pattern is to group similar questions, create representative rationales, and reuse those as guidance for future prompts. That can widen coverage across a messy task set and reduce some of the prompt-authoring burden.

It also changes the failure mode. Handwritten examples usually fail because they age badly or overfit to a narrow slice of requests. Generated examples can fail because they encode weak reasoning at scale. Teams need review steps, regression tests, and a way to retire bad exemplars quickly.

The core trade-off remains the same as noted earlier. You may save manual prompt work, but you still pay in latency and added system complexity.

Where these techniques fit in production

Advanced CoT earns its keep in a narrow set of cases:

- High-consequence decisions where a wrong answer triggers manual review, user frustration, or downstream correction work

- Asynchronous workflows where extra seconds of latency do not hurt the product experience

- Measured instability where evaluation runs show meaningful variance across single-pass outputs

- Broad task families where maintaining fixed few-shot examples becomes a recurring operational burden

Outside those cases, advanced CoT is usually the wrong optimization target. A tighter prompt, better retrieval, stronger constraints, or a deterministic post-processing layer often delivers more value per token.

That is the product lesson teams learn after deployment. Better reasoning techniques help, but only when they fit the cost, latency, and reliability envelope of the system around them.

The Hidden Costs and Failure Modes of CoT

A team ships a reasoning prompt, sees better answers in testing, and assumes the feature is ready. Then production traffic hits. Response times climb, token spend spikes, and support reviews uncover a harder problem: the model is explaining wrong answers with confidence.

CoT changes the operating profile of a system. It can improve multistep reasoning on the right tasks, but it also increases output length, adds latency, and creates new failure surfaces that are easy to miss in a prompt playground.

Where reasoning chains go wrong

The common failure mode is plausible but incorrect intermediate reasoning. One bad step early in the chain can steer every later step off course, while the final answer still sounds careful and well supported.

Longer chains also create more places for the model to drift. It may introduce assumptions that were never in the prompt, mix retrieved facts with fabricated ones, or overfit to the format of a worked example instead of the actual task. In production, that shows up as answers that look more trustworthy than they are.

That distinction matters for product teams. Users and reviewers often give extra credit to a verbose explanation, even when the explanation contains the error.

The cost problem is usually larger than the quality gain

The second issue is operational. CoT often expands the number of output tokens and extends time to first useful answer. On async workflows, that may be fine. On a user-facing path, it can be the difference between a feature that feels responsive and one that feels slow.

As noted earlier, recent benchmarks on newer models show a mixed picture. Some models gain a little from explicit CoT. Others gain very little or even regress. The latency penalty, however, is often obvious.

That is why teams should stop asking, "Does CoT help?" and start asking four narrower questions:

A small quality gain can be worth the cost in back-office review tools, claims workflows, or research assistants. The same gain is often a poor trade in chat, search, onboarding, and other latency-sensitive experiences.

Why model choice changes the recommendation

Older and smaller models often benefit more from explicit reasoning structure. Newer reasoning-capable models may already do enough internal decomposition without being told to spell it out step by step.

That creates a practical trap. A prompt pattern that helped on one model can become redundant on the next model upgrade. If a team never retests, they keep paying the latency and token bill after the benefit has disappeared.

The safe default is simple:

CoT is not a default setting. It is a performance trade-off. The right decision depends on model behavior, task complexity, latency budget, and how costly a wrong but well-explained answer is in the product.

Productizing CoT Prompts Safely and Effectively

A prompt that looks great in a demo can still fail in production. The failure usually shows up in three places first: higher token spend, slower responses, and answers that sound well-reasoned while still being wrong.

Teams that ship CoT reliably treat prompts the same way they treat models, APIs, and feature flags. They keep prompts out of application code, version them, test them against baselines, and make rollback cheap.

Treat prompts like production assets

Hardcoding a CoT prompt into the app creates avoidable operational friction. Every wording change becomes a deploy. Version comparison gets messy. Incident review is harder because logs rarely capture the full prompt, model settings, and output together.

A better setup includes a prompt registry, version history, staged rollout controls, and per-call traces. CoT prompts benefit from this more than simple instruction prompts because small wording changes can affect output length, reasoning style, and failure behavior.

Store more than the prompt text. Store the model, temperature, max token limits, input schema, expected output shape, and the evaluation results that justified the prompt version in the first place. Without that context, teams end up arguing about preferences instead of looking at evidence.

Evaluate CoT against a baseline, not against intuition

The first production mistake is assuming that more reasoning text means better reasoning. It often does not.

Run side-by-side tests between a direct prompt and a CoT prompt on the same task set. Review three things together:

- Final answer quality

Did CoT improve accuracy or task completion in a way users would notice? - Latency

Did the extra reasoning push the response outside the product's acceptable wait time? - Token usage

Did the prompt and output grow enough to change unit economics?

As noted earlier, explicit reasoning can also introduce failure modes of its own, including error propagation and long, low-value outputs. That is why manual review still matters. Aggregate scores can hide a prompt that performs well overall but fails badly on a specific workflow, customer segment, or document type.

Build controls for the failure modes you can predict

CoT should be configurable, not universal. In production, the safer pattern is task-based routing.

Use direct prompting for short-form tasks, low-latency surfaces, and cases where the answer is easy to verify. Reserve CoT for tasks that are multi-step, expensive to get wrong, or reviewed by an operator who benefits from seeing the model's working process.

A practical rollout checklist:

- Create a direct-prompt baseline before adding any reasoning scaffold

- Test zero-shot and few-shot versions separately because examples can help quality but also increase cost and drift

- Set output limits so verbose reasoning does not expand without bound

- Review failure cases by hand instead of relying only on summary metrics

- Route by task type or risk level rather than forcing one prompt pattern across the whole product

- Keep rollback immediate so a bad prompt version can be disabled without an app release

One more production lesson matters here. Model upgrades change the value of CoT. A prompt that improved results on one model can become unnecessary, or even harmful, on the next. Re-run evaluations after model changes, pricing changes, and context window changes. If the gain disappears, remove the reasoning scaffold and take the latency and cost savings.

The goal is operational control. Teams need to know where CoT improves outcomes, where it creates drag, and how to change that behavior safely.

If you're building AI features and want a cleaner way to manage prompt versioning, routing, logs, latency, and per-call cost visibility, Supagen is worth a look. It gives teams a production layer for AI apps so you can test CoT against direct prompts, inspect failures, and update prompt behavior without hardcoding everything into your application.